The Silicon Shift

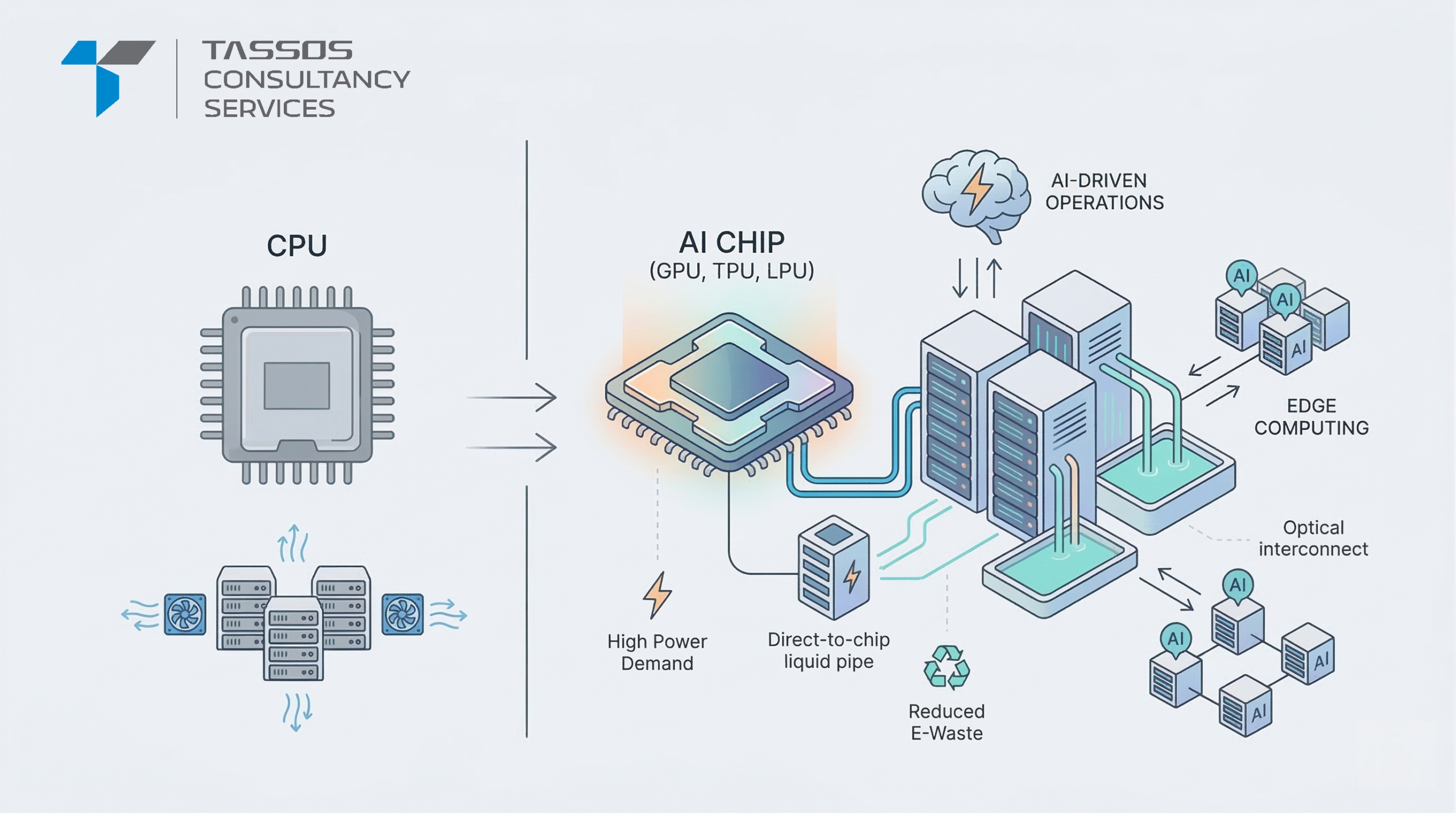

Something big is happening inside the buildings that power the internet. For decades, data centers — the giant warehouses full of servers that store and process information — were built around one type of chip: the CPU, or Central Processing Unit. But thanks to the rise of AI (Artificial Intelligence), that’s all changing. We’re in the middle of the biggest shift in data center history, and AI chips are at the heart of it.

This article explains what’s changing, why it matters, and what the future looks like — in plain, easy-to-understand language.

Is the CPU Era Coming to an End?

Think of a CPU like a very smart person who can handle many different tasks, but only a few at a time. For regular computing tasks, that’s perfectly fine. But AI works differently. Training an AI model means doing billions of math calculations, many of them at the same time. A CPU simply can’t keep up with that.

That’s where AI chips come in. Instead of one very smart processor, AI chips have thousands of smaller processors working at the same time. This approach — called parallel processing — is what makes AI possible at scale.
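To make the idea of parallel processing concrete, here is a minimal Python sketch. It splits one big calculation (multiplying pairs of numbers and adding up the results) into chunks and hands each chunk to a separate worker. The function names and the worker count are made up for illustration. Python threads will not actually speed this up; the point is only to show the "split the work, then combine the answers" pattern that AI chips carry out in hardware across thousands of cores.

```python
from concurrent.futures import ThreadPoolExecutor


def dot_serial(a, b):
    """Multiply matching pairs and add them up, one pair at a time."""
    total = 0
    for x, y in zip(a, b):
        total += x * y
    return total


def dot_parallel(a, b, workers=4):
    """Split the same job into chunks and give each chunk to its own worker."""
    # Ceiling division so every element lands in some chunk (at least 1 per chunk).
    chunk = max(1, (len(a) + workers - 1) // workers)
    pieces = [(a[i:i + chunk], b[i:i + chunk]) for i in range(0, len(a), chunk)]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        partial_sums = pool.map(lambda piece: dot_serial(*piece), pieces)
        # Combine the partial answers into the final result.
        return sum(partial_sums)


a = list(range(1, 1001))
b = list(range(1000, 0, -1))
print(dot_serial(a, b) == dot_parallel(a, b))
```

Both functions give the same answer. The difference is that the second one is organized so that many workers could each handle a slice at the same moment.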

There are three main types of AI chips you’ll hear about:

- GPU (Graphics Processing Unit) — Originally made for video games, now the go-to chip for AI.

- TPU (Tensor Processing Unit) — Google’s custom chip designed specifically for AI tasks.

- LPU (Language Processing Unit) — A newer type of chip, introduced by Groq, built especially for running large language models (the kind of model behind tools like ChatGPT or Claude).

The shift to AI chips isn’t just about replacing one component with another — it forces data centers to rethink everything, including how they use power, stay cool, and connect machines together.

1. The Heat Problem: Power and Cooling

AI chips are incredibly powerful, and that power comes at a cost: heat. A traditional server rack uses about 10–15 kilowatts of electricity. An AI-powered rack can use 100 kilowatts or more. That’s roughly the same as running 70 to 100 household microwaves at once (a typical microwave draws 1 to 1.5 kilowatts), in a space no bigger than a wardrobe.
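The rack figures above are easy to turn into a quick comparison. The short Python sketch below uses only the numbers quoted in this section, plus one simplifying assumption of our own: that the rack runs at its full power draw around the clock.

```python
HOURS_PER_DAY = 24


def daily_energy_kwh(rack_kw):
    """Energy used in one day by a rack drawing rack_kw continuously (an assumption)."""
    return rack_kw * HOURS_PER_DAY


traditional_kw = (10, 15)  # range quoted for a traditional rack
ai_kw = 100                # lower bound quoted for an AI rack

ratio_low = ai_kw / traditional_kw[1]   # compared with a 15 kW rack
ratio_high = ai_kw / traditional_kw[0]  # compared with a 10 kW rack

print(f"An AI rack draws about {ratio_low:.1f}x to {ratio_high:.1f}x the power of a traditional rack")
print(f"Daily energy at {ai_kw} kW: {daily_energy_kwh(ai_kw)} kWh")
```

Under that assumption, one AI rack draws roughly 6.7 to 10 times the power of a traditional rack and uses 2,400 kilowatt-hours in a day.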

For fifty years, data centers have used fans and air conditioning to keep things cool. But AI chips generate so much heat so quickly that air cooling simply isn’t enough anymore. The industry is moving to two newer methods:

- Direct-to-Chip Cooling: Liquid coolant flows through pipes to a metal cold plate mounted directly on the chip, absorbing heat much more efficiently than air.

- Immersion Cooling: Entire servers are submerged in a special non-conductive liquid (one that doesn’t carry electricity, so it won’t short out the electronics). Such liquids can absorb heat up to 1,000 times better than air. It sounds extreme, but it works, and it’s becoming more common for heavy AI workloads.
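Why does liquid win? A rough calculation helps. The Python sketch below uses the standard heat-transfer relationship (heat removed = density × flow × specific heat × temperature rise) to estimate how much coolant must pass a 100-kilowatt rack each second. The 10-degree temperature rise is an assumed value, and plain water stands in for the coolant purely for illustration. The fluids actually used for immersion cooling generally hold less heat than water, so their advantage over air is smaller than this example suggests.

```python
def flow_needed_m3_per_s(heat_watts, density_kg_m3, specific_heat_j_kg_k, temp_rise_k):
    """Volume of coolant per second needed to carry away heat_watts."""
    return heat_watts / (density_kg_m3 * specific_heat_j_kg_k * temp_rise_k)


HEAT_W = 100_000   # a 100 kW AI rack
TEMP_RISE_K = 10   # assumed: coolant leaves 10 degrees warmer than it arrived

# Approximate textbook properties at room temperature.
air_flow = flow_needed_m3_per_s(HEAT_W, density_kg_m3=1.2, specific_heat_j_kg_k=1005, temp_rise_k=TEMP_RISE_K)
water_flow = flow_needed_m3_per_s(HEAT_W, density_kg_m3=1000, specific_heat_j_kg_k=4186, temp_rise_k=TEMP_RISE_K)

print(f"Air:   {air_flow:.2f} cubic meters per second")
print(f"Water: {water_flow * 1000:.2f} liters per second")
```

With these assumed numbers, air cooling needs about 8.3 cubic meters of air every second, while water needs only about 2.4 liters per second to carry away the same heat.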

2. Speed Matters: Better Networking

When you’re training an AI model across thousands of chips at once, those chips need to talk to each other — fast. Think of it like a relay race where every runner needs to pass the baton perfectly. If communication is slow or drops out, the whole thing falls apart.

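Standard Ethernet, the everyday networking technology found in most offices and data centers, wasn’t designed for this kind of traffic, and without special tuning it can be too slow or too unpredictable. Data centers are upgrading to: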

- InfiniBand and Proprietary Fabrics: Super-fast, low-delay connections that let thousands of chips work together like a single giant brain.

- Optical Interconnects: Using light (fiber optic cables) instead of electricity (copper wires) to send data, even over very short distances inside the data center. Optical links can carry much more data than copper, with less signal loss and lower power use.
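To see why link speed matters so much, consider how long it takes a group of chips to share their results after each training step. The Python sketch below uses the textbook estimate for one common sharing method, called ring all-reduce, in which each chip sends and receives about 2 × (N − 1) / N times the data size. The choice of method, the 10 GB data size, the chip count, and both link speeds are assumptions picked for illustration, and the estimate ignores per-message delays.

```python
def ring_allreduce_seconds(data_gb, num_chips, link_gb_per_s):
    """Bandwidth-only estimate of one ring all-reduce across num_chips."""
    if num_chips < 2:
        return 0.0  # a single chip has nobody to share with
    traffic_gb = 2 * (num_chips - 1) / num_chips * data_gb
    return traffic_gb / link_gb_per_s


DATA_GB = 10   # hypothetical amount of data shared per training step
CHIPS = 1024   # hypothetical cluster size

slow = ring_allreduce_seconds(DATA_GB, CHIPS, link_gb_per_s=12.5)  # 100 gigabit link
fast = ring_allreduce_seconds(DATA_GB, CHIPS, link_gb_per_s=50.0)  # 400 gigabit link

print(f"100 Gb/s link: {slow:.2f} s per step")
print(f"400 Gb/s link: {fast:.2f} s per step")
```

With these made-up numbers, the slower link spends about 1.6 seconds per step just moving data, compared with about 0.4 seconds on the faster link. Repeated over many thousands of steps, that gap adds up quickly.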

3. Bringing AI Closer to You: Edge Computing

Right now, when you use an AI tool, your request travels to a massive data center somewhere, gets processed, and the answer comes back to you. For most uses, this takes only a second or two — which is fine.

But for some applications, even a one-second delay is too slow. Imagine a self-driving car or a robot performing surgery. These systems need AI responses in under 10 milliseconds — that’s faster than the blink of an eye. Sending data to a distant data center and back simply won’t cut it.
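Distance alone can use up that 10-millisecond budget. Light in a glass fiber covers roughly 200 kilometers per millisecond, so the travel time is easy to estimate. The Python sketch below does the arithmetic for two hypothetical distances. It counts only the travel time through fiber and ignores processing and routing delays, which would add more.

```python
FIBER_KM_PER_MS = 200.0  # light in glass fiber: roughly 200 km per millisecond
BUDGET_MS = 10           # response-time budget mentioned above


def round_trip_ms(distance_km):
    """Travel time there and back over fiber, ignoring all other delays."""
    return 2 * distance_km / FIBER_KM_PER_MS


# Hypothetical distances, chosen for illustration.
for name, km in [("distant cloud region", 1500), ("nearby edge site", 50)]:
    rtt = round_trip_ms(km)
    verdict = "fits within" if rtt < BUDGET_MS else "exceeds"
    print(f"{name} ({km} km): {rtt:.1f} ms round trip, {verdict} the {BUDGET_MS} ms budget")
```

A data center 1,500 km away costs 15 milliseconds in travel time alone, already over budget. A site 50 km away costs half a millisecond.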

The solution is “edge computing” — placing smaller data centers closer to where data is being created (like in a city, a factory, or a hospital). These “Micro-Data Centers” are equipped with specialized AI chips that process data locally and only send useful summaries back to the main cloud. This keeps things fast, local, and efficient.
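Here is a small Python sketch of the "process locally, send only a summary" idea. The sensor readings, the alert threshold, and the function name are all hypothetical. A real edge system would run an AI model at this step rather than simple statistics, but the flow of data is the same.

```python
from statistics import mean


def summarize(readings, alert_above):
    """Reduce a batch of raw readings to a few numbers worth sending to the cloud."""
    return {
        "count": len(readings),
        "mean": round(mean(readings), 2),
        "max": max(readings),
        "alerts": sum(1 for r in readings if r > alert_above),
    }


# Hypothetical temperature readings collected at a factory.
readings = [71.2, 70.8, 72.5, 90.1, 71.0, 70.9]
summary = summarize(readings, alert_above=85.0)
print(summary)
```

Instead of sending every reading to the main cloud, the edge site sends four numbers, including the one alert that actually needs attention.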

4. Big Tech Builds Its Own Chips

Buying AI chips off the shelf — mainly from companies like NVIDIA — is very expensive. So the biggest tech companies have started designing their own custom chips, built specifically for their own software.

- Amazon Web Services (AWS) has its own chip called Trainium, designed for training AI models.

- Google has its TPU (Tensor Processing Unit), used to power everything from Google Search to Google Translate.

This creates an interesting situation for businesses. A company might use one cloud provider to train an AI model (because that provider’s custom chip does it cheaply), and a completely different cloud provider to run that AI model for users (because of its wider global reach). This is called a multi-cloud strategy, and it’s becoming the new normal.

5. The Challenges That Come With Change

This transformation isn’t without its difficulties. Three major challenges stand out:

- Power Grid Strain: AI data centers use so much electricity that they’re putting serious pressure on local power grids. Some regions are struggling to supply enough electricity to keep up.

- Chip Shortages: The most advanced chips (built on 3-nanometer-class manufacturing processes, with transistor features smaller than many viruses) are in very high demand. Sometimes, companies have the money to build new data centers but simply can’t get enough chips to fill them.

- E-Waste: AI chips become outdated every 18 to 24 months, which means huge amounts of discarded hardware. This creates a growing environmental problem that the industry is still figuring out how to address.

The Future: Data Centers That Run Themselves

Here’s a fascinating twist: AI is now being used to manage the very data centers that run AI. In 2026, AI systems are monitoring chip temperatures, predicting when hardware is about to fail, and automatically moving computing tasks to locations where electricity is cheapest or comes from renewable sources (like solar or wind).
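The "move the work to where power is cheapest" idea can be sketched in a few lines of Python. Everything here is hypothetical: the site names, prices, renewable shares, and the simple selection rule. Real systems weigh many more factors, but the core decision looks something like this.

```python
from dataclasses import dataclass


@dataclass
class Site:
    name: str
    price_per_kwh: float    # electricity price at this site
    renewable_share: float  # fraction of power from renewables, 0.0 to 1.0
    free_kw: float          # spare power capacity available right now


def pick_site(sites, job_kw, min_renewable=0.0):
    """Return the cheapest site that has room for the job and is green enough."""
    candidates = [
        s for s in sites
        if s.free_kw >= job_kw and s.renewable_share >= min_renewable
    ]
    if not candidates:
        return None  # no site qualifies; the job has to wait
    return min(candidates, key=lambda s: s.price_per_kwh)


# Hypothetical sites, invented for illustration.
sites = [
    Site("site-a", price_per_kwh=0.12, renewable_share=0.30, free_kw=500),
    Site("site-b", price_per_kwh=0.08, renewable_share=0.20, free_kw=50),
    Site("site-c", price_per_kwh=0.10, renewable_share=0.90, free_kw=300),
]

choice = pick_site(sites, job_kw=100)
print(choice.name if choice else "no site available")
```

In this example, site-b has the cheapest power but not enough spare capacity for a 100 kW job, so the job goes to site-c. Raising `min_renewable` lets an operator insist on greener sites, even at a higher price.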

The goal is what experts call a “Self-Optimizing Data Center” — a facility that doesn’t need constant human management because it monitors, adapts, and fixes itself automatically. It’s not fully here yet, but we’re getting very close.

Conclusion

AI chips have moved far beyond being simple components. They are now reshaping the entire physical world of computing — from how buildings are cooled, to how electricity grids are used, to how companies make strategic decisions about their technology.

We are living through a foundational shift in technology infrastructure. The companies and organisations that understand and adapt to this silicon-driven transformation won’t just survive — they will define the next era of the digital world.

The age of AI infrastructure has begun. And it’s being built one chip at a time.
